procedural harmony

Introduction

Thanks to a bunch of centuries of music history, we now have a large set of rules for writing music, they are sometimes so precise that it can be tempting to ask a computer to follow them for you.

I've been curious about the results and difficulties of such a task so I've been trying a few times to program an automated composer/arranger.

My latest attempt is currently a 500 lines long java thing filled with missing features and todo lists (that might never be completed) but it can generate some interesting results already so I'm going to say some words about it as a possible postmortem.

In short, the goal of this project is to let the user define a set of parameters or constraints (such as a set of instruments, a given melody, bass line or anything in-between) and then generate a piece of music (just the midi score, not the actual sounds) by letting the software fill the blanks.

Parameters

Here is the list of customizable parameters :

- number of beats in the song

this is currently defining the number of successive chords

- number of instruments

each instrument can be assigned a lowest and highest playable note, and maximum interval from it and the voice just below it, this is defaulted to the values for a typical string quartet

- preexisting notes

any note at any beat for any instrument can be defined, no matter what happens next the software will always keep those as requested

There is also a set of more experimental parameters that the user is allowed to tweak anyway :

- number of all possible notes

the default value here is 127, which makes sense for a MIDI export

- number of notes in an octave

note that some compositional behaviors such as avoiding specific intervals will always be aligned to the official number of chromatic semi-tones in that interval even though the acutal definition of a semi-tone would change depending on the number of notes in an octave. The best way to fix this would probably be to define intervals as rounded proportions of an octave.

- number and importance of degrees

other things being equal, the software will always favor one degree over another ; as an arguable degrees list (from best to worst) I settled for : I V IV II VI III VII

- known modes

while evaluating possible scales, the software is currently going through major and harmonic minor which seems quite restrictive, I'm going to say a few words about this later

For now I'm assuming the score is just a series of chords in which each instrument plays one note.

In order to handle various durations and more complex input melodies, I planned to :

- somehow define the ideal time subdivision of one beat (= number of notes contained in the same beat/chord)

- extract for each beat a list of models in which each predefined note was labeled as real part of the chord or not

- then choose the most relevant chord when defining the scales

- wait for the last part of the generation to re-inject the rejected notes along with other ornamentations

I haven't done any of these at the moment and am just working with plain chords where each note has the same duration (for longer chords I just duplicate them).

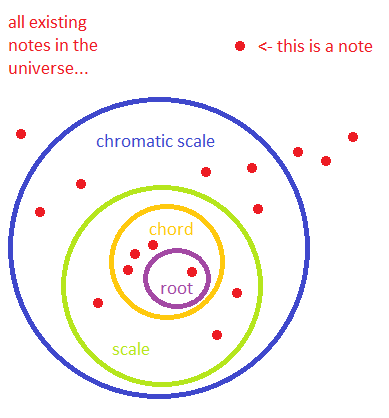

Tonality

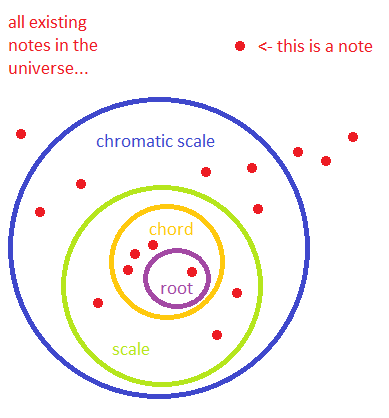

We're going to speak about scales, chords, notes, and some of these concepts imply each other so let's start with a picture of how things can be seen :

These are various "pools" of notes (the actual octave of the note doesn't matter).

Number of notes in a chord or scale may vary ; scales we're going to look at are using 7 notes ; there are 12 notes in the chromatic scale.

There are many notes in the observable universe.

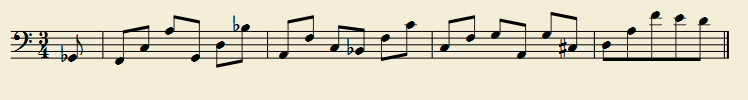

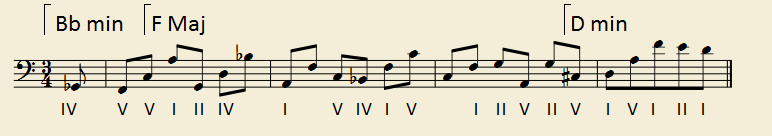

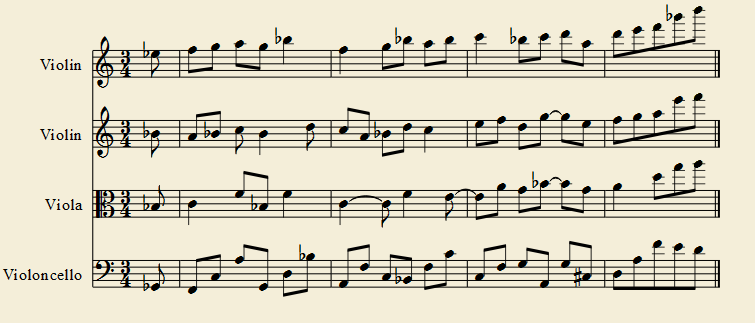

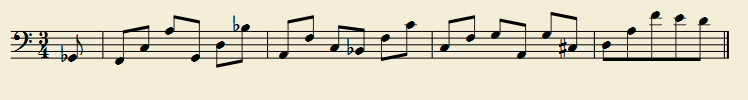

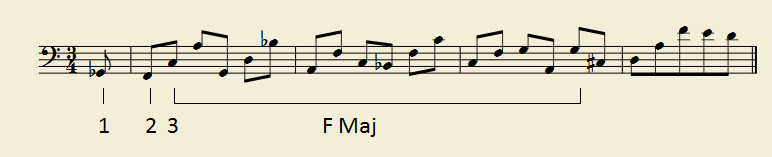

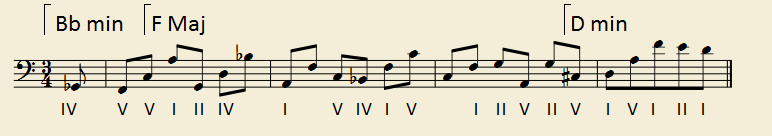

Let's consider this simple bass line that will serve as an example as I go through each step. listen

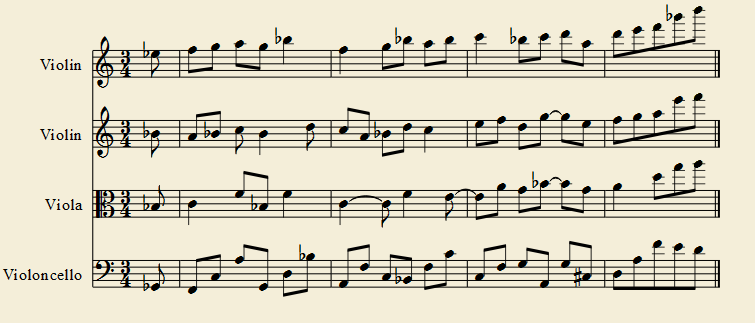

We're going to ask the algorithm to compose a few bars of music for a string quartet where this part should be played by the cello.

The first thing that is being defined is scales.

In the beginning all chords are assigned an "undefined" scale.

The algorithm will go through all scales, for each of them it will try to find the longest run (I mean the longest number of consecutive chords in the song where all predefined notes fit with the scale).

Then the algorithm will pick the scale with the longest run, apply it to the chords (replacing its "undefined" state) and then restart the operation on the remaining undefined chords until all of them are assigned a scale.

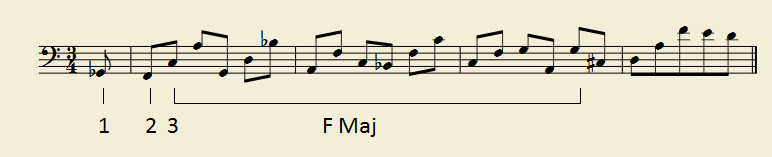

For instance here, the longest sequence found is F major in the middle of the tune, starting from the note labeled (3).

Most people would probably have started F major on the very first note (1) with some kind of altered Vth degree, but the algorithm doesn't know about those yet.

The second note (2) seems to be allowed in the F major part, but the algorithm prefers to wait for a potential Vth for the scale to change.

In the case of two equally long top runs, the one that allows for more usual degrees will be picked.

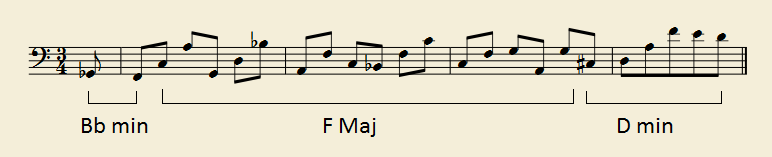

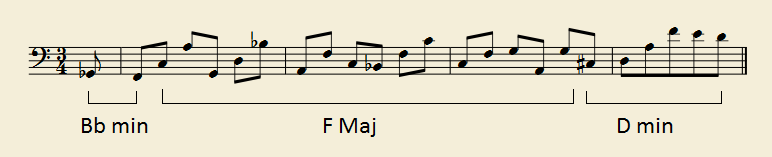

Here is what the algorithm settled to in the end.

Here should be the part where the algorithm tries to add predefined (or preferred) notes on its own in order to produce repeating pattern or contrapuntal structures as long as they fit with the scales.

That's one of the exciting extensions I hope I'll be able to experiment, but nothing about it is done at the moment.

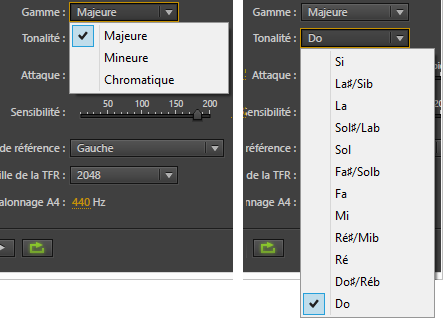

Sidenote : Speaking of modes, I've noticed everybody seems to disagree on what a convenient interface should be when it comes to defining tonality.

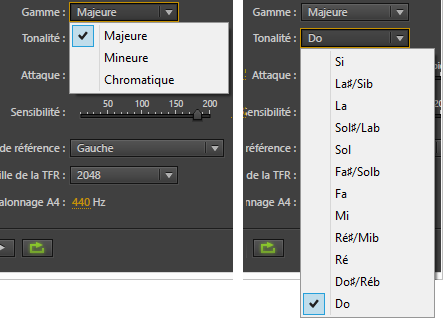

For instance here it what the Adobe Audition auto-pitcher looks like (in its weird French machine-translated version) :

Apart from the only three choices (minor/major/chromatic) being restrictive and "minor" being arguably ambiguous, an autotune process should not have to care much whether a given note is the root for a mode, but just if it is present in it.

The way I like to do it is to store the root note and the presence/absence of each note in a scale (with no relation with the root note, although it would not make sense to not mark it as present).

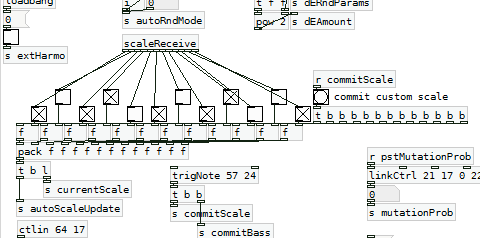

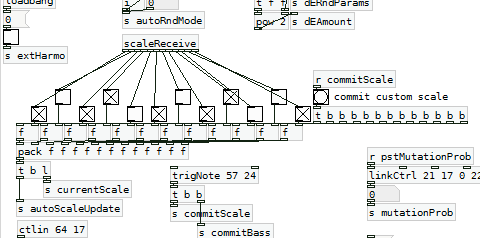

For instance this capture from an unrelated pure data project shows all 12 toggles disposed like a piano keyboard :

If you're making something involving a user-defined scale, please consider doing something like this rather than a list.

Assuming we're talking about chromatic tempered scale, that would give us 12 booleans that can group up to form an integer so that each mode is represented by a number.

Here is the list of numeric equivalents for each scale (M stands for major and m for harmonic minor) :

2741 = C M

1387 = C#M

2774 = D M

1453 = D#M

2906 = E M

1717 = F M

3434 = F#M

2773 = G M

1451 = G#M

2902 = A M

1709 = A#M

3418 = B M

2477 = C m

0859 = C#m

1718 = D m

3436 = D#m

2777 = E m

1459 = F m

2918 = F#m

1741 = G m

3482 = G#m

2869 = A m

1643 = A#m

3286 = B m

Other scale numbers can be found using this table.

So even if I'm currently only using two modes, adding more scales with various number of notes would just mean adding more integers and should not be a problem.

(for some reason scales in my source code are being called "tonalities" because of confusion with the French language)

Degrees

Then each beat will be assigned a degree (in short : on the colored circle graph at the top of this page, a degree defines which notes will be picked from the scale in order to form a chord).

Out of all possible degrees allowed by the predefined chord notes, one will be picked by looking up the first place in some arbitrary table (see "importance of degrees" in parameters).

The Vth degree will be favored if the scale is changing on this beat.

There could probably be some room there for a lot more rules to mimic common musical practices (favor common chains such as II->V->I, ban degrees if we know they are going to produce specific intervals at forbidden places, force degree changes where the melody seems to be too repetitive...).

Here a human would probably want to only group notes by 3 but as I previously said this algorithm thinks that each note is a potential new chord and does not care about rhythm.

Assigning notes to voices

Now it's time to define some actual notes that are going to be played by the instruments.

In short, the method I'm using is to :

1- consider every possible note for every instrument and every beat

2- then trim the problematic ones

3- then out of the remaining possibilities pick the one that seems to be the best based on various criteria

Let's take a closer look at each of these three steps.

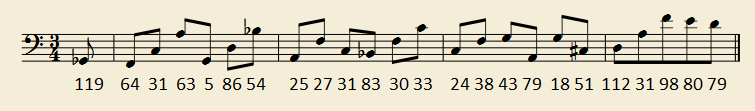

1) Generating every possible combination of notes for each beat.

As usual with exponential stuff... Even for only four instruments, that task would actually be much too cpu consuming and take a very long time.

So, using the instrument parameters, the results are already being trimmed there to :

- notes that the instrument will actually be able to play

- notes that are close enough from their neighbor voices

- notes that fit with user-predefined melodies

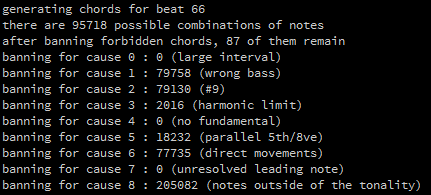

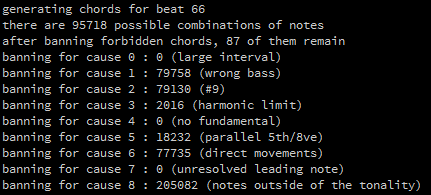

2) Banning forbidden chords.

Here is a list of reasons why a given chord can be banned for the list of possibilities :

- an interval between two voices is too large

- the bass is inconsistent with the chosen degree (I currently only allow fundamental state or first drop)

- there is a minor 9th or extended drop of it somewhere

- if a low pitched note doesn't have a strong harmonic relation with the bass

- the fundamental is not present in the chord

- if notes doesn't belong to the scale (but I deliberately allow those who belong to the scale and not the chord)

And here is a list of banning criteria that depend on a chord's relation with the previous chord :

- there are consecutive 5ths or 8ves.

- there is a direct movement in a context that doesn't permit it (depends on the voice, the degree, and the melodic contour)

- if a top leading tone doesn't lead to a tonic

Unlike the process of assigning scales, these three steps are applied on each chord linearly from start to end, meaning the relation of a chord with its neighbors is always done from an undefined one to the defined previous one.

From a distance, I think I should have programmed it from end to start instead since musical construction tends to work better that way.

I suppose it could be possible to apply step 2 to all chords first and evaluate all possible combinations before moving on to the next step and settling for final chords, but I had a feeling this would again make the process longer and a lot more complex to only provide subtle improvements.

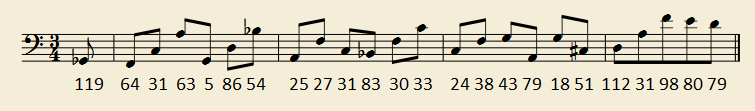

The number below each note represents the number of possible chord structures that are at this point eligible for the next step.

So far the algorithm will try to find chords with one note for each voice. If it doesn't find any good one, it will mute every voice except those with a predefined note.

I could ideally make it look for chords where only problematic voices are muted (and just in general silence is supposed to play a large role in the song structure, so it could take advantage of it).

3) Assigning scores to the remaining possibilities.

This part is really WIP and subjective but here are some of the things I'm taking into account :

- whether the notes are common for this chord or not

- if notes (especially wrong notes) are duplicated between voices

- if the melodic contour is smooth

- if the drop is evolving on a constant degree

- if leading tones lead to tonics

- if intervals between voices are large enough

- if the interval at the top is too small

Other things I was planning to take into account eventually :

- if there are 3rd and 7th in the chord, especially on a Vth degree

- presence of notes that haven't been heard for a while in the song

- position of the bass note

- favor inverse melodic contours between voices

Since all notes from the tonality are allowed, duplicates are avoided and big intervals are preferred as long as they don't exceed a given maximum (usually one octave, a bit more between the bass and the tenor) the process often ends up with chords like this with many 7th between voices. They sound slightly odd but I'm ok with them.

The final result : listen

At this point should be the step where the software puts back time subdivisions and add

nonchord notes.

I haven't worked on that part at all yet.

Tweaking

The banning process (2) is fairly simple (though it would make sense to be more flexible there too since it sometimes refuses all solutions for a chord).

At some point I had to print the number of times chords were banned for each possible reason, to be sure that none of them were abusively restrictive.

The scoring process on the other hand is a lot more subjective. Some properties raise or lower the score by a given value for each chord, but I wasn't sure which property would matter most and at which rate each of them would occur.

In order to tweak the values, I had to take existing melodies, generate a score out of them and track where the algorithm wrote something that I wouldn't allow myself (or the opposite case). I would then modify the score modificators accordingly.

Taking a random popular melody and ask the algorithm to make an arrangement of it usually leads to funny results because it rarely picks the solution your ears are used to.

For instance, here is how a human (me in this case) could possibly arrange "Someday my prince will come" for four voices : listen

And now here is how my algorithm thinks it should sound : listen

Let's do the same for "Giant steps", here are the original chords : listen

And the generated version : listen

These examples above use a first voice melody as an input, here is a result where an intermediary voice is fed with a randomized melody, for some reason those usually sound bland listen

Result

The processing applet will print the result to the console like this :

D#4 | F 4 | G 4 | A 4 | G 4 | A#4 | A#4 | F 4 | F 4 | G 4 | A#4 | A 4 | A#4 | C 5 | C 5 | A#4 | C 5 | D 5 | A 4 | D 5 | E 5 | F 5 | A#5 | D 6 | (voice 1 )

A#3 | A 3 | A#3 | C 4 | A#3 | A#3 | D 4 | C 4 | A 3 | A#3 | D 4 | C 4 | C 4 | E 4 | F 4 | D 4 | G 4 | G 4 | E 4 | F 4 | G 4 | A 4 | E 5 | F 5 | (voice 2 )

A#2 | C 3 | C 3 | F 3 | A#2 | F 3 | F 3 | C 3 | C 3 | C 3 | F 3 | F 3 | E 3 | E 3 | A 3 | G 3 | A#3 | A#3 | G 3 | A 3 | A 3 | D 4 | G 4 | A 4 | (voice 3 )

F#1 | F 1 | C 2 | A 2 | G 1 | D 2 | A#2 | A 1 | F 2 | C 2 | A#1 | F 2 | C 3 | C 2 | F 2 | G 2 | E 2 | G 2 | C#2 | D 2 | A 2 | F 3 | E 3 | D 3 | (voice 4 )

A#m | A#m | F M | F M | F M | F M | F M | F M | F M | F M | F M | F M | F M | F M | F M | F M | F M | F M | D m | D m | D m | D m | D m | D m | ( scales )

004 | 005 | 005 | 001 | 002 | 004 | 004 | 001 | 001 | 005 | 004 | 001 | 005 | 005 | 001 | 002 | 005 | 002 | 005 | 001 | 005 | 001 | 002 | 001 | (degrees )

And can also export it as a MIDI file thanks to this thing.

The website seems to be dead, so here is the code.

Now here is a 1+ hour long gorgeous 6-voices organ piece, made by this algorithm based on wild randomness, enjoy : listen

(I've received some comments about this, so : I'm not claiming you can make a good song by simply expanding on a random melody and that's not even the goal of all this, it is just a good benchmark process ; if the tool can be good at harmonizing random notes it probably will be as good with consistent melodies)

I'm pretty sure there are other tools with a similar approach such as this one, I haven't heard of many though.

Most of the generative music principles I've seen so far are either based on constant scales, ignoring the common features of tonality, or experimental and far from the usual music theory. So if you know about similar projects, please tell me about it.

gitHub page for this project : https://github.com/Morusque/harmonyGenerator